Paper Pursuit #6 - MapReduce: Simplified Data Processing on Large Clusters

Authors: Jeffrey Dean and Sanjay Ghemawat (Google) [2004]

Original paper: MapReduce: Simplified Data Processing on Large Clusters

(Image reference: Elephant is a common mascot for technologies involving large data sets)

Introduction

This week we have another important paper from Google that presents a functional model for processing large-scale data sets. MapReduce applies the fundamental models in functional programming, inspired by Lisp’s map and reduce functions, in a very large, distributed setup.

Programming Model

If you’ve used functional programming paradigms before, you might know what map and reduce operations are. Functional programming revolves a lot around keeping the state (a.k.a. context or data) out of your code and hence you write programs composed of pure functions.

Pure functions are your regular functions that do not have any side effects like updating/reading from a global state, reading from a database/API etc. They accept some inputs and produce some outputs. Pure functions produce consistent output, i.e. for a given input the function always produces the same output irrespective of when, where or how it is called.

// Impure - modifies external state

function concat(arr1, arr2) {

arr1.push(...arr2);

return arr1;

}

// Pure - only reads arguments

function concat(arr1, arr2) {

return [...arr1, ...arr2];

}MapReduce operates on a model of splitting a data set into smaller units and mapping them to a map function. The map function processes a unit and outputs a list of intermediate key-value pairs. A reduce function then combines the outputs from all map functions for the dataset and outputs a single output.

Both these functions are implemented by the user, the MapReduce environment executes and manages the infrastructure. So in the end the user simply has to write two methods. A simple word counter for a set of documents might look like this:

map(key, value) {

// key = document, value = contents

for each word in document:

EmitIntermediate(word, 1)

}

reduce(key, values) {

// key = word, values = list of intermdeiate word counts

// emitted by map

let count = 0;

for each v in values:

count += v

Emit(count)

}The final value emitted by the setup is a set of key-value pairs for each word and its corresponding count in the whole set of documents. A more detailed example can be found here.

Examples

The paper provides several examples of use cases where a map-reduce model would work the best. Some of these include:

Distributed Grep: A MapReduce implementation can grep across multiple files where map emits a line containing a pattern and reduce simply collates these results.

Reverse Web-Link Graph: To construct a dictionary of URL targets mapped to a list of sources to the target. map emits <target, source> when it processes the source document and finds a target URL. Then reduce collates all target values emitted to construct a list of sources.

Benefits of the functional model

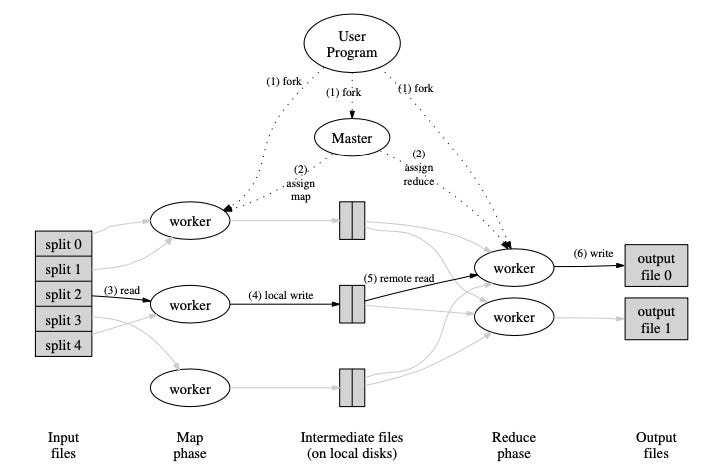

Since the map and reduce functions are pure, the model can be scaled and parallelized very well. Parallelization is often difficult when there is a shared state involved, luckily this is non-existent in MapReduce. Google operates MapReduce across a large cluster of machines where each machine operates either as a map or reduce worker with a single master controlling the operations.

Google uses a distributed file system (DFS) to store emitted objects from map workers and for reduce workers to read.

Takeaways and further readings

MapReduce is a great model to apply while processing large sets of data. Hadoop is a popular implementation of the map-reduce model and it provides APIs to implement the methods. Today, Hadoop is less frequently used as the alternative Apache Spark is favoured for large-scale data processing. This is mainly because Spark is much faster and easier to use than Hadoop.

Spark uses the MapReduce paradigm but instead of separating the map and reduce steps on different machines, it operates this as a combined step in memory on a single machine. It also provides client libraries in various languages, unlike Hadoop which was only available in Java.

When in use, MapReduce was used to regenerate the Google Search index completely and greatly benefited the company in several large-scale data processing tasks. It proved that restricting to a programming model can enable the implementation of parallel and distributed algorithms at scale.

Relevant readings:

Spark: Cluster Computing with Working Sets, Matei Zaharia et al, University of California, Berkeley

Did you enjoy reading today’s issue? I write here every Tuesday! If you found this interesting, share it with someone who would like to read such content. I’ll see you next week! 💛